#AI Security

The Technical Anatomy of Model Extraction in 2026 (The Great AI Theft of the Century?)

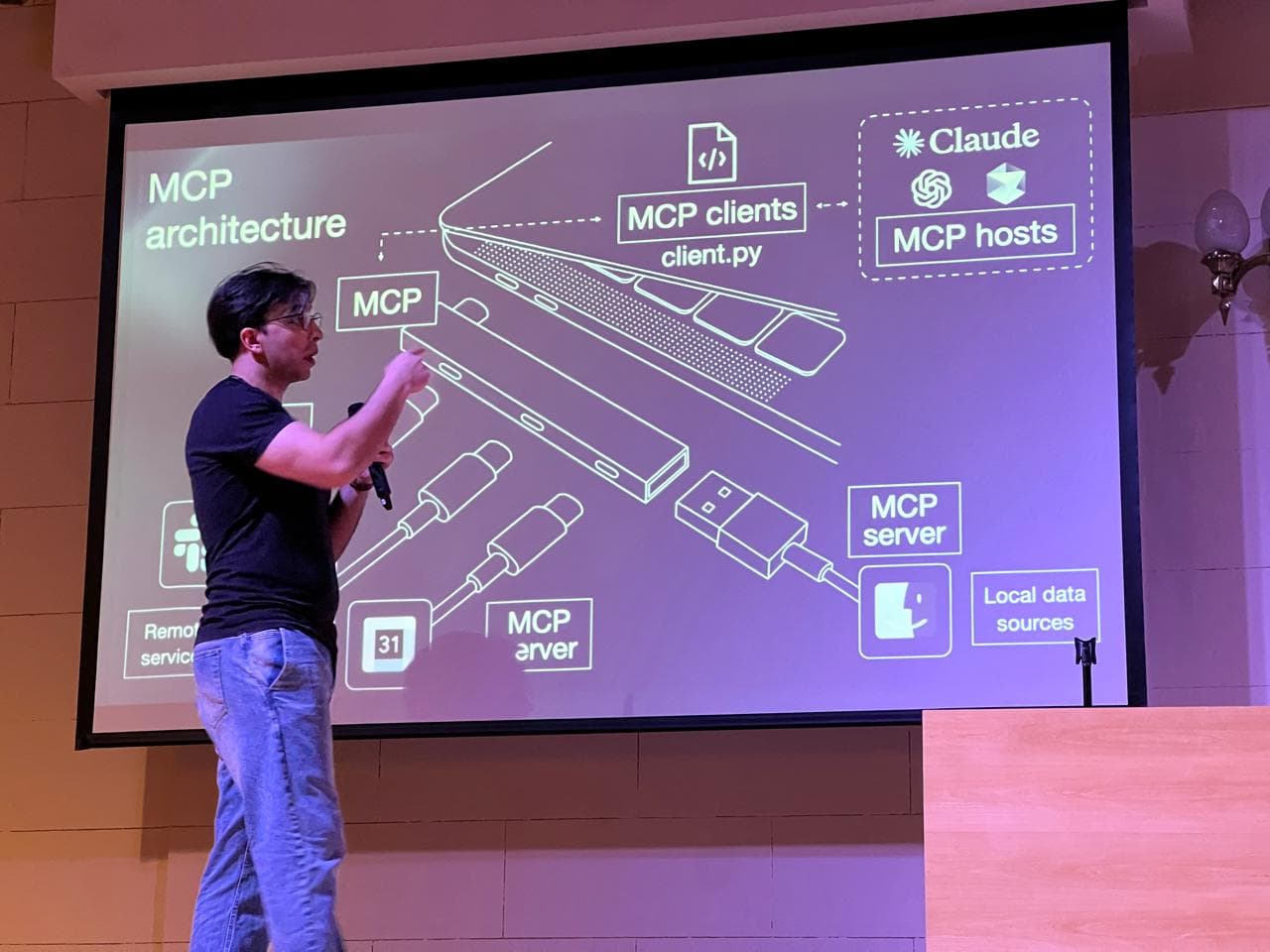

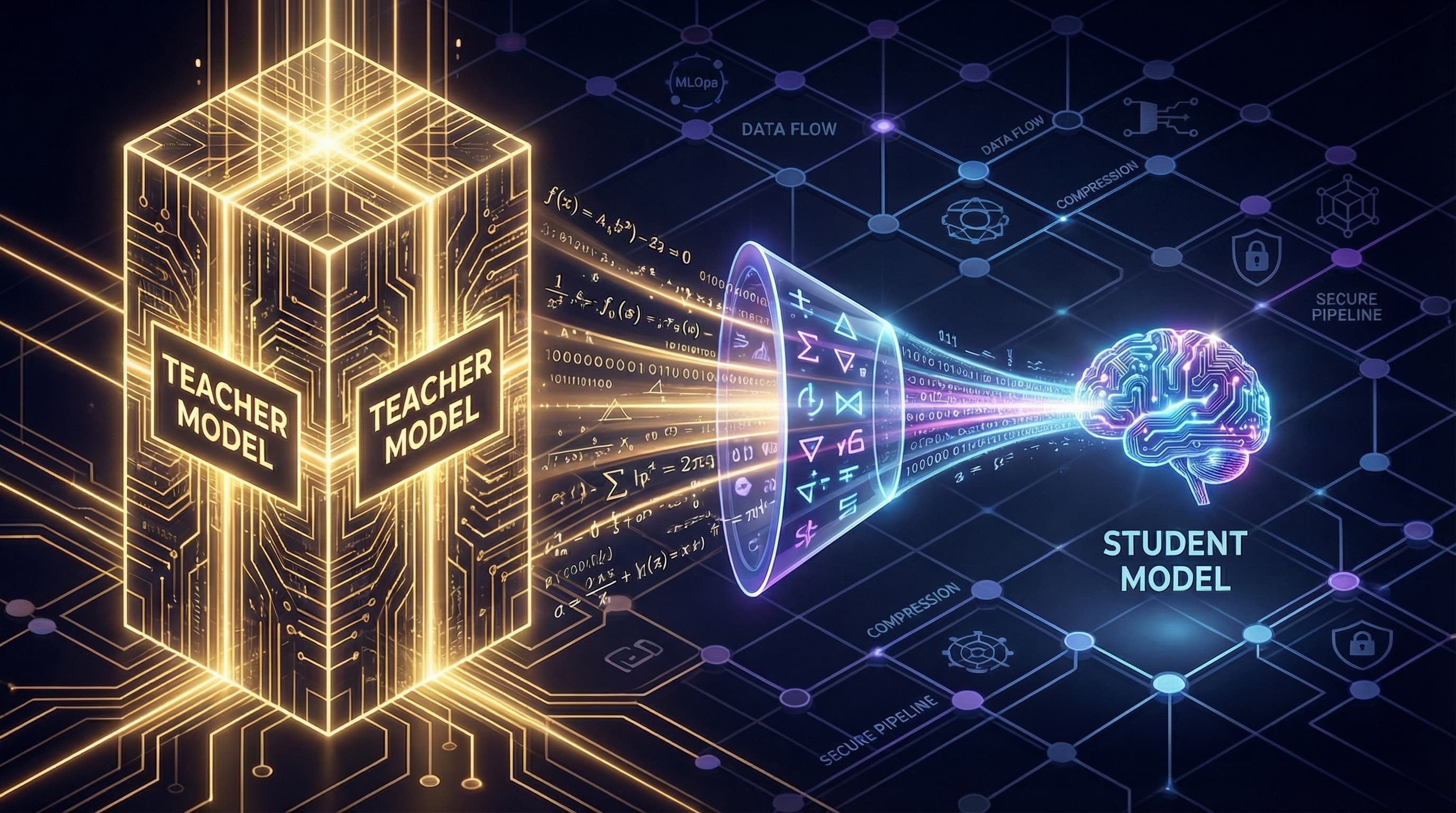

A deep technical dive into model extraction attacks. We dissect the mathematics of knowledge distillation, logit-harvesting pipelines, and the cryptographic failures of LLM watermarking.